Production RAG Stack on Kubernetes: Reference Architecture (2026)

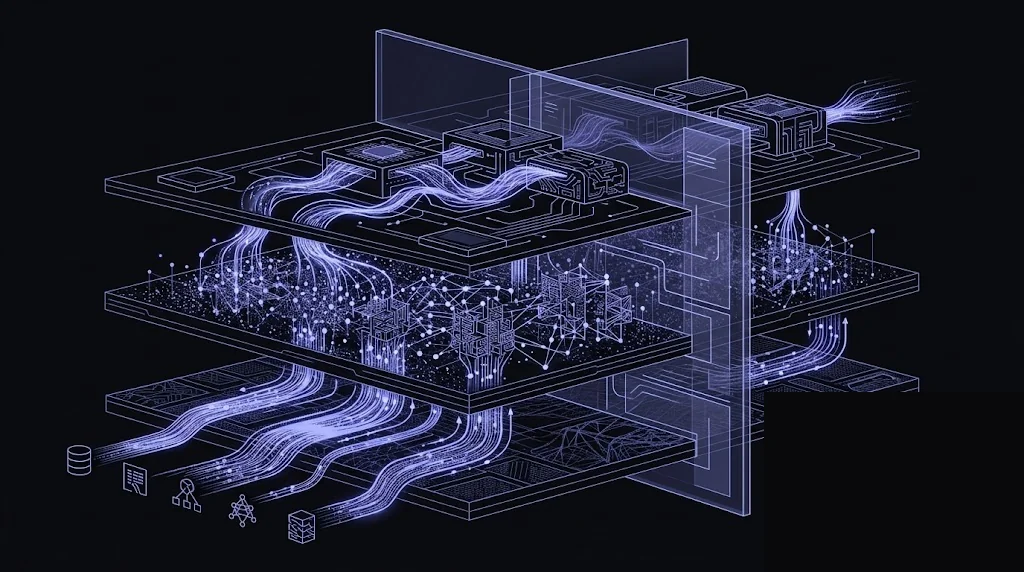

End-to-end production RAG architecture on Kubernetes: ingestion pipeline, embedding and vector search with Qdrant, LLM generation via LiteLLM gateway, Langfuse observability, and GCC-sovereign deployment patterns across EKS, AKS, and Core42.

A production RAG system has many failure modes, and most of them come from treating it as a single application instead of a distributed stack. This post is the canonical reference architecture we deploy for clients running RAG on Kubernetes. Each layer has its own deployment guide; this post is the map that ties them together.

If you’ve read the Langfuse, Qdrant, and LiteLLM guides, this post is how they fit together. If you haven’t, start here for the overview and dive into the component guides next.

The six layers

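A RAG stack on Kubernetes has six concerns: ingestion, embedding, vector search, the LLM gateway, orchestration, and observability. The diagram numbers seven layers because it draws sources (Layer 1) separately from ingestion (Layer 2). Skipping any of these concerns pushes complexity into your application code, where it calcifies.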

```
                      ┌──────────────────────┐
                      │   Client App / UI    │
                      └──────────┬───────────┘
                                 │ /chat (OpenAI-compatible API)
                                 ▼
                      ┌──────────────────────┐
Layer 6               │ Orchestration Service│
Orchestration         │ (LangGraph /         │
                      │  LlamaIndex /        │
                      │  custom FastAPI)     │
                      └──┬────────────────┬──┘
                         │                │
                retrieve │                │ generate
                         ▼                ▼
Layer 4            ┌───────────┐   ┌──────────────┐  Layer 5
Retrieval          │ Vector DB │   │ LLM Gateway  │  Gateway
                   │ (Qdrant / │   │  (LiteLLM)   │
                   │  Milvus)  │   └──────┬───────┘
                   └─────▲─────┘          │
                         │            ┌───┴─────────────────┐
                         │            ▼                     ▼
Layer 3            ┌─────┴─────┐  ┌──────────────┐   ┌──────────────┐
Embedding          │ Embedding │  │ Azure OpenAI │   │ Self-hosted  │
                   │ Service   │  │ (UAE North)  │   │ vLLM / TGI   │
                   │ (TEI)     │  │ Bedrock (BHR)│   │ on GPU pool  │
                   └─────▲─────┘  └──────────────┘   └──────────────┘
                         │
Layer 2            ┌─────┴─────┐
Ingestion          │ Ingestion │
                   │ Workers   │
                   │ (chunking,│
                   │  cleanup) │
                   └─────▲─────┘
                         │
Layer 1            ┌─────┴─────┐
Sources            │S3/SharePt/│
                   │Confluence │
                   └───────────┘

Layer 7 (cross-cutting): Langfuse ◀── traces, evals, costs from every layer above
```

Each layer’s design choices are independent but not unrelated. Let’s walk through them.

Layers 1 and 2: Ingestion

This is the layer that makes or breaks retrieval quality. Chunking, cleaning, and metadata extraction matter more than the vector DB you pick.

Deployment pattern:

```yaml
apiVersion: apps/v1
kind: Deployment
metadata:
  name: rag-ingestion-worker
  namespace: rag-platform
spec:
  replicas: 2
  selector:
    matchLabels: {app: rag-ingestion-worker}
  template:
    metadata:
      labels: {app: rag-ingestion-worker}
    spec:
      containers:
        - name: worker
          image: registry.example.ae/rag/ingestion:v1.4.2
          env:
            - name: SOURCE_QUEUE
              value: "redis://redis.rag-platform/0"
            - name: VECTOR_DB_URL
              value: "http://qdrant.vectordb.svc.cluster.local:6333"
            - name: EMBEDDING_URL
              value: "http://tei.embeddings.svc.cluster.local:80"
          resources:
            requests: {cpu: 500m, memory: 1Gi}
            limits: {memory: 4Gi}
---
apiVersion: keda.sh/v1alpha1
kind: ScaledObject
metadata:
  name: rag-ingestion-worker
  namespace: rag-platform
spec:
  scaleTargetRef: {name: rag-ingestion-worker}
  minReplicaCount: 2
  maxReplicaCount: 50
  triggers:
    - type: redis
      metadata:
        address: redis.rag-platform:6379
        listName: "ingest:pending"
        listLength: "500"
```

Ingestion patterns we recommend:

- Queue-driven, not cron-driven. Documents land on a Redis/Kafka queue when the source emits change events. Workers consume.

- Idempotent. Use the source system’s content hash as the vector DB point ID so re-ingesting the same doc replaces rather than duplicates.

- Parent-child chunking. Store child chunks (512-1024 tokens) with a reference to the parent chunk (2048-4096 tokens). Retrieve on child, generate on parent.

- Rich metadata. Every chunk gets source URL, last-modified, access-control group, language, and section heading as payload fields. Filtering on metadata is usually cheaper than expanding k at retrieval time.

- Tenant isolation baked into the payload. A tenant_id filter, required on every query, prevents cross-tenant leakage.
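The idempotency and parent-child patterns above can be sketched in a few functions. This is a minimal illustration, not our production worker. It assumes whitespace-split words stand in for model tokens, uses fixed 2048/512 windows without overlap, and uses a hypothetical payload layout. Qdrant point IDs must be unsigned integers or UUIDs, so the sketch folds the SHA-256 content hash into a UUIDv5. It scopes that ID by tenant so that identical documents from two tenants never overwrite each other.

```python
import hashlib
import uuid


def tokens(text: str) -> list[str]:
    # Assumption: whitespace words approximate model tokens; swap in your tokenizer.
    return text.split()


def windows(items: list[str], size: int) -> list[list[str]]:
    # Consecutive, non-overlapping windows of `size` items (the last may be shorter).
    return [items[i:i + size] for i in range(0, len(items), size)]


def point_id(tenant_id: str, content: str) -> str:
    # Fold the SHA-256 content hash into a UUIDv5 so Qdrant accepts it as a point ID.
    # Identical content for the same tenant always yields the same ID, so an upsert
    # replaces instead of duplicating.
    digest = hashlib.sha256(content.encode("utf-8")).hexdigest()
    return str(uuid.uuid5(uuid.NAMESPACE_URL, f"{tenant_id}:{digest}"))


def chunk_document(text: str, *, tenant_id: str, metadata: dict,
                   parent_size: int = 2048, child_size: int = 512):
    """Return (parents, children): parent texts keyed by ID, children as point dicts."""
    parents: dict[str, str] = {}
    children: list[dict] = []
    for parent_tokens in windows(tokens(text), parent_size):
        parent_text = " ".join(parent_tokens)
        parent_id = point_id(tenant_id, parent_text)
        parents[parent_id] = parent_text
        for child_tokens in windows(parent_tokens, child_size):
            child_text = " ".join(child_tokens)
            children.append({
                "id": point_id(tenant_id, child_text),
                "payload": {
                    **metadata,              # source URL, last-modified, ACL group, language, ...
                    "tenant_id": tenant_id,  # set after metadata so it cannot be overridden
                    "parent_id": parent_id,  # retrieve on child, generate on parent
                    "text": child_text,
                },
            })
    return parents, children
```

In a real worker, each child would get a vector from the embedding service before being upserted into Qdrant. Parents go to whatever store the generation step reads from. Content-hash IDs make re-ingesting unchanged content a no-op, but they do not remove the old chunks of an edited document. Deleting the existing points for that source URL before upserting covers that case.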

Layer 3: Embedding service

Two viable choices: managed (Azure OpenAI text-embedding-3-large, Cohere, Bedrock) or self-hosted (Hugging Face Text Embeddings Inference, or a TEI-equivalent).

Self-hosted TEI on GPU is the right answer when:

- Embedding volume is high enough that managed cost exceeds ~$3k/month

- Data sovereignty forbids sending content to managed endpoints at ingest time

- You need a specific embedding model (e.g., multilingual-e5, Arabic-optimized models) not available via managed providers

```yaml
apiVersion: apps/v1
kind: Deployment
metadata:
  name: tei
  namespace: embeddings
spec:
  replicas: 2
  selector:
    matchLabels: {app: tei}   # required for apps/v1 Deployments
  template:
    metadata:
      labels: {app: tei}
    spec:
      nodeSelector:
        # custom node label; match whatever label your GPU node pool carries
        node.kubernetes.io/gpu-family: "L4"
      tolerations:
        - key: "nvidia.com/gpu"
          operator: Exists
      containers:
        - name: tei
          image: ghcr.io/huggingface/text-embeddings-inference:cuda-1.5
          args:
            - "--model-id=BAAI/bge-m3"
            - "--max-batch-tokens=16384"
            - "--pooling=cls"
          resources:
            requests: {cpu: 4, memory: 16Gi, "nvidia.com/gpu": 1}
            limits: {memory: 16Gi, "nvidia.com/gpu": 1}
          ports:
            - containerPort: 80
```

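Pin to cheap inference GPUs (L4, T4, A10), not training cards. A single L4 handles roughly 8,000-15,000 embeddings/sec for a 768-dim BGE model, which is enough for most enterprise RAG ingest. The bge-m3 model in the manifest above is larger and produces 1024-dim vectors, so expect lower throughput from it.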
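A minimal client for the TEI service above could look like the following. It assumes the in-cluster URL from the ingestion worker's EMBEDDING_URL and uses TEI's /embed route, which takes {"inputs": [...]} and returns one vector per input. Batches are capped at 32 inputs, which is TEI's default --max-client-batch-size at the time of writing; check the value for your version. The post parameter exists only so the sketch can be exercised without a live server.

```python
import json
import urllib.request

TEI_URL = "http://tei.embeddings.svc.cluster.local:80"  # from the ingestion worker's EMBEDDING_URL


def post_json(url: str, body: dict, timeout: float = 30.0):
    """POST a JSON body and decode the JSON response."""
    req = urllib.request.Request(
        url,
        data=json.dumps(body).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )
    with urllib.request.urlopen(req, timeout=timeout) as resp:
        return json.load(resp)


def embed_batched(texts: list[str], *, base_url: str = TEI_URL, batch_size: int = 32,
                  post=post_json) -> list[list[float]]:
    """Embed texts in batches no larger than TEI's client batch limit."""
    vectors: list[list[float]] = []
    for start in range(0, len(texts), batch_size):
        batch = texts[start:start + batch_size]
        # /embed returns one vector per input, in input order; truncate=True clips
        # over-long inputs to the model's max length instead of rejecting them.
        result = post(f"{base_url}/embed", {"inputs": batch, "truncate": True})
        if len(result) != len(batch):
            raise ValueError(f"expected {len(batch)} vectors, got {len(result)}")
        vectors.extend(result)
    return vectors
```

As a rough sense of scale, consider the 8,000-15,000 embeddings/sec quoted for a 768-dim model. At those rates, a 10M-chunk backfill is about 667 to 1,250 seconds of GPU time on one L4, before queueing and network overhead.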

Layer 4: Vector database

Covered in depth in the Qdrant on Kubernetes guide. Key decisions at the stack level:

- One collection per logical corpus, not per tenant. Use payload filtering (tenant_id) for tenant isolation. Per-tenant collections explode operational complexity above ~50 tenants.

- Shard count ≥ 2× peer count so rebalancing has headroom.

- replication_factor: 2 as the default; 3 for availability-critical workloads.

- Quantization above 50M vectors. Scalar int8 is the sweet spot - 4x memory reduction for ~1% recall loss.
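The stack-level rules above map onto a Qdrant collection definition. This sketch only builds request bodies for Qdrant's REST API: PUT /collections/{name} for the collection and PUT /collections/{name}/index for the tenant_id payload index. Cosine distance and the thresholds encoded here are assumptions taken from this post, not Qdrant defaults. The RAM helper counts raw vector bytes only, ignoring the HNSW graph and payload, which is enough to show where the 4x saving from int8 comes from.

```python
QUANTIZE_ABOVE = 50_000_000  # vectors; threshold from the rules above


def collection_body(*, dim: int, peers: int, expected_vectors: int,
                    availability_critical: bool = False) -> dict:
    """Request body for PUT /collections/{name}."""
    body = {
        "vectors": {"size": dim, "distance": "Cosine"},           # distance is an assumption
        "shard_number": 2 * peers,                                # shard count >= 2x peer count
        "replication_factor": 3 if availability_critical else 2,
    }
    if expected_vectors > QUANTIZE_ABOVE:
        # int8 scalar quantization; always_ram keeps the quantized copy in memory
        body["quantization_config"] = {"scalar": {"type": "int8", "always_ram": True}}
    return body


# Request body for PUT /collections/{name}/index: a keyword index on tenant_id
# keeps the mandatory tenant filter cheap.
TENANT_INDEX = {"field_name": "tenant_id", "field_schema": "keyword"}


def raw_vector_gib(n_vectors: int, dim: int, bytes_per_dim: int) -> float:
    """RAM for raw vectors only (no HNSW graph, no payload): float32 = 4 bytes, int8 = 1."""
    return n_vectors * dim * bytes_per_dim / 2**30
```

For example, 50M 1024-dim vectors need about 190.7 GiB as float32 and about 47.7 GiB as int8.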

Layer 5: LLM gateway

Covered in LiteLLM on Kubernetes. At the stack level, the gateway is where you centralize:

- Provider keys (never inline them in orchestration code)

- Virtual keys per tenant or feature

- Per-tenant budgets and rate limits

- Provider fallback topology (primary: Azure UAE, fallback: Bedrock Bahrain)

- Cost attribution data for finance

One proxy fleet serves all downstream services. Don’t deploy one LiteLLM per team - the whole point is to centralize.
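LiteLLM's key management endpoint creates per-tenant virtual keys with budgets and rate limits. The endpoint is POST /key/generate, authenticated with the proxy master key. Below is a standard-library sketch. The tenant alias convention, the budget and limit values, and the gateway URL you pass in are hypothetical. LiteLLM budgets are expressed in USD.

```python
import json
import urllib.request


def virtual_key_request(tenant_id: str, *, monthly_budget_usd: float, rpm_limit: int,
                        tpm_limit: int, models: tuple[str, ...] = ("gpt-4o-uae-primary",)) -> dict:
    """Request body for LiteLLM's /key/generate, one key per tenant."""
    return {
        "key_alias": f"tenant-{tenant_id}",    # hypothetical naming convention
        "models": list(models),                # model aliases this key may call
        "max_budget": monthly_budget_usd,      # spend cap for the budget window
        "budget_duration": "30d",              # budget resets every 30 days
        "rpm_limit": rpm_limit,
        "tpm_limit": tpm_limit,
        "metadata": {"tenant_id": tenant_id},  # kept with the key for cost attribution
    }


def create_virtual_key(gateway_url: str, master_key: str, body: dict) -> str:
    """POST the body to the proxy and return the generated key."""
    req = urllib.request.Request(
        f"{gateway_url}/key/generate",
        data=json.dumps(body).encode("utf-8"),
        headers={"Authorization": f"Bearer {master_key}", "Content-Type": "application/json"},
        method="POST",
    )
    with urllib.request.urlopen(req, timeout=10) as resp:
        return json.load(resp)["key"]
```

Because orchestration code only ever holds the virtual key, rotating or re-budgeting a tenant is a gateway-side change with no application release.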

Layer 6: Orchestration service

This is the layer that owns your RAG logic. Keep it thin, explicit, and horizontally scalable. A minimal production skeleton:

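```python
# orchestrator/rag.py
import os

import httpx
from fastapi import FastAPI
from openai import AsyncOpenAI
from pydantic import BaseModel
from qdrant_client import AsyncQdrantClient, models

# Connection settings come from the environment (Secrets / ConfigMaps).
QDRANT_URL = os.environ["QDRANT_URL"]
QDRANT_API_KEY = os.environ.get("QDRANT_API_KEY")
LITELLM_URL = os.environ["LITELLM_URL"]
LITELLM_KEY = os.environ["LITELLM_KEY"]      # a LiteLLM virtual key, never a provider key
EMBEDDING_URL = os.environ["EMBEDDING_URL"]  # the TEI service
# Placeholder prompt; replace with your own.
SYSTEM_PROMPT = "Answer using only the provided context. If it is insufficient, say so."

app = FastAPI()
qdrant = AsyncQdrantClient(url=QDRANT_URL, api_key=QDRANT_API_KEY)
llm = AsyncOpenAI(base_url=LITELLM_URL, api_key=LITELLM_KEY)
http = httpx.AsyncClient(timeout=10.0)


class ChatRequest(BaseModel):
    message: str
    tenant_id: str
    session_id: str
    user_id: str


async def embed(text: str) -> list[float]:
    # TEI's /embed returns one vector per input.
    resp = await http.post(f"{EMBEDDING_URL}/embed", json={"inputs": text})
    resp.raise_for_status()
    return resp.json()[0]


def assemble_context(points) -> str:
    # Assumes each chunk's text is stored in a "text" payload field.
    return "\n\n".join(p.payload.get("text", "") for p in points)


@app.post("/v1/chat")
async def chat(req: ChatRequest):
    # 1. Embed the query
    q_embedding = await embed(req.message)

    # 2. Retrieve with a mandatory tenant filter
    #    (query_points supersedes the deprecated search() in recent qdrant-client releases)
    result = await qdrant.query_points(
        collection_name="documents",
        query=q_embedding,
        query_filter=models.Filter(
            must=[models.FieldCondition(key="tenant_id", match=models.MatchValue(value=req.tenant_id))]
        ),
        limit=20,
        with_payload=True,
        search_params=models.SearchParams(hnsw_ef=128, exact=False),
    )
    hits = result.points

    # 3. Rerank (optional) and prompt assembly
    context = assemble_context(hits[:8])

    # 4. Generate with LiteLLM (metadata is forwarded to Langfuse as trace attributes)
    resp = await llm.chat.completions.create(
        model="gpt-4o-uae-primary",
        messages=[
            {"role": "system", "content": SYSTEM_PROMPT},
            {"role": "user", "content": f"{context}\n\nQuestion: {req.message}"},
        ],
        extra_body={"metadata": {
            "trace_user_id": req.user_id,
            "session_id": req.session_id,
            "tags": ["rag", "prod"],
            "generation_name": "rag-answer",
        }},
    )
    return {"answer": resp.choices[0].message.content}
```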

Deploy it like any stateless service: 3+ replicas, HPA on CPU or RPS, topology-spread across zones, PDB with minAvailable: 2.
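The deployment guidance above can be expressed as Kubernetes objects. This sketch builds the PodDisruptionBudget, the HorizontalPodAutoscaler, and a zone topology-spread constraint as Python dicts, then prints the first two as a JSON List, which kubectl accepts. The Deployment name, the 3-12 replica range, and the 70% CPU target are hypothetical. ScheduleAnyway makes the zone spread a preference, so pods still schedule when zones are unbalanced. Only the CPU metric is shown, because RPS-based scaling needs a custom metrics adapter.

```python
import json

APP = "rag-orchestrator"   # hypothetical Deployment name
NAMESPACE = "rag-platform"
LABELS = {"app": APP}


def orchestrator_policies(min_replicas: int = 3, max_replicas: int = 12, cpu_target: int = 70):
    """Return (topology spread constraint, [PDB, HPA]) for the orchestration Deployment."""
    if min_replicas < 3:
        # With minAvailable: 2, two replicas leave zero allowed disruptions and node drains stall.
        raise ValueError("minAvailable: 2 needs at least 3 replicas")
    spread = {  # goes under spec.template.spec.topologySpreadConstraints in the Deployment
        "maxSkew": 1,
        "topologyKey": "topology.kubernetes.io/zone",
        "whenUnsatisfiable": "ScheduleAnyway",
        "labelSelector": {"matchLabels": LABELS},
    }
    pdb = {
        "apiVersion": "policy/v1",
        "kind": "PodDisruptionBudget",
        "metadata": {"name": APP, "namespace": NAMESPACE},
        "spec": {"minAvailable": 2, "selector": {"matchLabels": LABELS}},
    }
    hpa = {
        "apiVersion": "autoscaling/v2",
        "kind": "HorizontalPodAutoscaler",
        "metadata": {"name": APP, "namespace": NAMESPACE},
        "spec": {
            "scaleTargetRef": {"apiVersion": "apps/v1", "kind": "Deployment", "name": APP},
            "minReplicas": min_replicas,
            "maxReplicas": max_replicas,
            "metrics": [{
                "type": "Resource",
                "resource": {"name": "cpu",
                             "target": {"type": "Utilization", "averageUtilization": cpu_target}},
            }],
        },
    }
    return spread, [pdb, hpa]


if __name__ == "__main__":
    _, objects = orchestrator_policies()
    # Usage: python orchestrator_policies.py | kubectl apply -f -
    print(json.dumps({"apiVersion": "v1", "kind": "List", "items": objects}, indent=2))
```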

Frameworks like LangGraph and LlamaIndex are fine - treat them as libraries, not deployment patterns. The deployment topology is yours.

Layer 7: Observability

Langfuse owns the observability plane. Every trace from the orchestrator and every LLM call via LiteLLM lands in Langfuse. Build these dashboards on day one:

- Retrieval quality - context precision (via LLM-as-judge on sampled traces), hit@k for known queries

- End-to-end latency - p50/p95/p99 broken down by stage: embed, retrieve, rerank, generate

- Cost per answer - tokens × provider rate, rolled up by tenant and feature

- Error rate - 4xx (client), 5xx (gateway), provider failures, retrieval empty results

- Eval drift - Ragas faithfulness and answer-relevance scores over time on a rolling sample

Without this, you’re shipping RAG blind.
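The dashboard metrics above reduce to a few aggregations. This is a sketch, assuming trace data has already been exported from Langfuse into plain dicts. The field names here are hypothetical, not Langfuse's export schema, and prices are supplied per 1k tokens. Percentiles use the nearest-rank method.

```python
from collections import defaultdict


def percentile(values: list[float], p: int) -> float:
    """Nearest-rank percentile for an integer p in 1..100."""
    ordered = sorted(values)
    rank = max(1, -(-p * len(ordered) // 100))  # ceil(p * n / 100) in integer math
    return ordered[rank - 1]


def stage_latency(traces: list[dict], stages=("embed", "retrieve", "rerank", "generate")) -> dict:
    """p50/p95/p99 per stage; each trace maps stage name -> milliseconds."""
    report = {}
    for stage in stages:
        values = [t[stage] for t in traces if stage in t]
        if values:  # stages that never ran (e.g. rerank disabled) are omitted
            report[stage] = {f"p{p}": percentile(values, p) for p in (50, 95, 99)}
    return report


def hit_at_k(retrieved: dict, expected: dict, k: int = 5) -> float:
    """Share of known queries whose expected document ID is in the top-k retrieved IDs."""
    hits = sum(1 for q, doc in expected.items() if doc in retrieved.get(q, [])[:k])
    return hits / len(expected)


def cost_per_answer(calls: list[dict], rates: dict) -> dict:
    """Average cost per answer by tenant; rates map model -> (input, output) price per 1k tokens."""
    totals, counts = defaultdict(float), defaultdict(int)
    for c in calls:
        in_rate, out_rate = rates[c["model"]]
        totals[c["tenant_id"]] += (c["prompt_tokens"] / 1000 * in_rate
                                   + c["completion_tokens"] / 1000 * out_rate)
        counts[c["tenant_id"]] += 1
    return {tenant: totals[tenant] / counts[tenant] for tenant in totals}
```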

Cluster topology: namespaces and isolation

We deploy the stack across a handful of namespaces with explicit boundaries:

| Namespace | Contents | Network exposure |
|---|---|---|
| rag-platform | Orchestration service, ingestion workers | Ingress + egress to data, embeddings, vectordb, llm-gateway |
| embeddings | TEI GPU pods | Internal only; accessed from rag-platform |
| vectordb | Qdrant cluster | Internal only; accessed from rag-platform |
| llm-gateway | LiteLLM proxy + Postgres/Redis | Internal + egress to provider IPs |
| observability | Langfuse, Prometheus, Grafana | Internal; Ingress for humans |
| data | Postgres clusters, Redis, Kafka | Internal only |
| ingress-nginx | Shared ingress controller | External LB |

Apply a default-deny NetworkPolicy in every namespace and make every allow rule explicit. This is the configuration auditors like to see.
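Default-deny plus explicit allows can be generated rather than hand-written. This sketch emits standard networking.k8s.io/v1 NetworkPolicy objects for four of the namespaces in the table. It assumes cluster DNS runs in kube-system, which is the case on EKS and AKS. It relies on the kubernetes.io/metadata.name label that Kubernetes sets on every namespace. The ports come from the manifests and components above: TEI on 80, Qdrant REST on 6333, and LiteLLM's default proxy port of 4000. Once egress is also denied, a path needs an egress rule on the source side and an ingress rule on the destination side.

```python
import json


def _ns(name: str) -> dict:
    # Every namespace carries the kubernetes.io/metadata.name label automatically.
    return {"namespaceSelector": {"matchLabels": {"kubernetes.io/metadata.name": name}}}


def default_deny(namespace: str) -> list[dict]:
    """Deny all ingress and egress, then re-allow DNS so pods can still resolve names."""
    deny = {
        "apiVersion": "networking.k8s.io/v1", "kind": "NetworkPolicy",
        "metadata": {"name": "default-deny-all", "namespace": namespace},
        "spec": {"podSelector": {}, "policyTypes": ["Ingress", "Egress"]},
    }
    dns = {
        "apiVersion": "networking.k8s.io/v1", "kind": "NetworkPolicy",
        "metadata": {"name": "allow-dns-egress", "namespace": namespace},
        "spec": {
            "podSelector": {}, "policyTypes": ["Egress"],
            "egress": [{"to": [_ns("kube-system")],
                        "ports": [{"protocol": "UDP", "port": 53}, {"protocol": "TCP", "port": 53}]}],
        },
    }
    return [deny, dns]


def allow(src: str, dst: str, port: int) -> list[dict]:
    """Open src -> dst:port: egress from src plus ingress into dst."""
    egress = {
        "apiVersion": "networking.k8s.io/v1", "kind": "NetworkPolicy",
        "metadata": {"name": f"allow-egress-to-{dst}", "namespace": src},
        "spec": {"podSelector": {}, "policyTypes": ["Egress"],
                 "egress": [{"to": [_ns(dst)], "ports": [{"protocol": "TCP", "port": port}]}]},
    }
    ingress = {
        "apiVersion": "networking.k8s.io/v1", "kind": "NetworkPolicy",
        "metadata": {"name": f"allow-ingress-from-{src}", "namespace": dst},
        "spec": {"podSelector": {}, "policyTypes": ["Ingress"],
                 "ingress": [{"from": [_ns(src)], "ports": [{"protocol": "TCP", "port": port}]}]},
    }
    return [egress, ingress]


def stack_policies() -> list[dict]:
    policies: list[dict] = []
    for ns in ("rag-platform", "embeddings", "vectordb", "llm-gateway"):
        policies += default_deny(ns)
    policies += allow("rag-platform", "embeddings", 80)     # TEI
    policies += allow("rag-platform", "vectordb", 6333)     # Qdrant REST
    policies += allow("rag-platform", "llm-gateway", 4000)  # LiteLLM proxy
    return policies


if __name__ == "__main__":
    print(json.dumps({"apiVersion": "v1", "kind": "List", "items": stack_policies()}, indent=2))
```

Before applying this, add the remaining allow rules from the table. These include ingress-nginx to the orchestrator, traffic into data and observability, and provider egress from llm-gateway. Add intra-namespace traffic such as the ingestion workers' Redis as well. Without them, the default-deny cuts those paths.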

Capacity planning at the stack level

The simplest planning model:

- Start from target RAG QPS (requests per second at steady state).

- Multiply by 3 for peak (we typically size for 3x p50 traffic).

- For each layer, compute capacity from that peak:

  - Orchestrator: ~50 QPS per 2-vCPU replica. Add 30% headroom for HPA reaction.

  - Vector DB: depends on collection size and ef. A 3-peer Qdrant cluster at ef=128 on r6i.xlarge does ~300 QPS for 10M vectors.

  - Embedding (if queried per request): ~1,000 QPS per L4 GPU at 768 dim. Queries that share embedding across reranking cost more.

  - LLM gateway: ~500 RPS per 1-vCPU pod. Gateway is usually not the bottleneck; providers are.

  - Langfuse ingestion: 1 worker per 10k events/sec; size with 50% headroom.
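The planning model above fits in a small function. This sketch encodes the per-layer rules of thumb from the list. The minimum replica floors are availability assumptions, read off the "3+ replicas" guidance and the worked example that follows. Integer ceiling division avoids float rounding at exact boundaries. Vector DB sizing is left out because it depends on collection size and ef rather than a single per-replica rate.

```python
PEAK_FACTOR = 3             # size for 3x steady-state traffic
ORCH_QPS_PER_REPLICA = 50   # per 2-vCPU orchestrator replica
ORCH_HEADROOM_PCT = 30      # extra room for HPA reaction time
TEI_QPS_PER_L4 = 1_000      # query embedding at 768 dim
GATEWAY_RPS_PER_POD = 500   # per 1-vCPU LiteLLM pod
SECONDS_PER_MONTH = 86_400 * 30


def ceil_div(a: int, b: int) -> int:
    """Integer ceiling division."""
    return -(-a // b)


def plan(steady_qps: int, *, min_orchestrators: int = 3, min_tei: int = 2,
         min_gateway: int = 3) -> dict:
    """Replica counts per layer from steady-state QPS; the min_* floors are HA assumptions."""
    peak = steady_qps * PEAK_FACTOR
    return {
        "peak_qps": peak,
        "orchestrator_replicas": max(
            min_orchestrators,
            ceil_div(peak * (100 + ORCH_HEADROOM_PCT), ORCH_QPS_PER_REPLICA * 100)),
        "tei_l4_gpus": max(min_tei, ceil_div(peak, TEI_QPS_PER_L4)),
        "litellm_pods": max(min_gateway, ceil_div(peak, GATEWAY_RPS_PER_POD)),
        "requests_per_month": steady_qps * SECONDS_PER_MONTH,
    }
```

plan(50) reproduces the customer-support example below: 150 QPS peak, 4 orchestrator replicas, 2 L4 GPUs, and 3 LiteLLM pods. It also shows that 50 QPS steady is about 129.6M requests a month. That number is worth checking against your Langfuse tier and trace sampling rate.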

Example: a customer-support RAG at 50 QPS steady, 150 QPS peak, 20M vectors:

- Orchestrator: 4 × 2 vCPU (4 × 50 = 200 QPS capacity, plenty of headroom)

- Qdrant: 3 × r6i.xlarge with int8 quantization

- TEI: 2 × L4 (~2k QPS of query-embedding capacity against a 150 QPS peak)

- LiteLLM: 3 × 1 vCPU

- Langfuse: small tier (~5M events/month, which presumes trace sampling: 50 QPS steady is roughly 130M requests a month)

Steady-state cost (EKS me-central-1, excluding LLM tokens): ~32,000 AED/month. LLM tokens will be another 20-80k AED depending on response length and model choice.

GCC data sovereignty blueprint

For NESA / CBUAE / ADGM workloads, here’s the exact configuration we ship:

```
┌────────────────────────────────────────────────────────────────────┐
│ Azure UAE North | AWS Middle East (UAE or Bahrain)                 │
├────────────────────────────────────────────────────────────────────┤
│ - AKS / EKS cluster in-region                                      │
│ - Private endpoints only; no public cluster API                    │
│ - Customer-managed KMS keys for EBS, S3, ACR/ECR                   │
│ - Workload identity federated to client's Entra ID                 │
│                                                                    │
│ Embedding:     Azure OpenAI UAE North text-embedding-3-large       │
│                OR self-hosted TEI on in-region L4 pool             │
│                                                                    │
│ Generation:    Azure OpenAI UAE North gpt-4o (primary)             │
│                Bedrock Bahrain claude-3.5-sonnet (fallback)        │
│                Cross-region fallback only if data-sharing DPA      │
│                explicitly permits; otherwise remove fallback.      │
│                                                                    │
│ Vector DB:     Qdrant self-hosted in-cluster                       │
│ Gateway:       LiteLLM self-hosted in-cluster                      │
│ Observability: Langfuse self-hosted in-cluster                     │
│ Secrets:       Azure Key Vault / AWS Secrets Manager in-region     │
│ Backups:       Same-region S3/Blob with CMK, cross-region disabled │
└────────────────────────────────────────────────────────────────────┘
```

Common questions we get from regulators:

- “Where does the embedding model run?” - In-region, either Azure OpenAI UAE North or self-hosted GPU.

- “Can the LLM provider train on our data?” - Azure OpenAI and Bedrock offer zero-retention/zero-training commitments; sign the DPA and configure the deployment accordingly.

- “What happens if the primary provider is down?” - Regulated fallback goes to another in-region provider only. Out-of-region fallback requires explicit approval.

- “Who has access to Langfuse traces?” - RBAC in Langfuse, SSO via the client’s IdP, audit log to their SIEM.

Deployment sequencing

Stand the stack up in this order. Skipping steps creates compounding outages.

- Cluster baseline - ingress-nginx, cert-manager, external-secrets, KEDA, prometheus-operator, metrics-server.

- Data tier - Postgres (CloudNativePG), Redis (Operator), ClickHouse if Langfuse self-hosted.

- Langfuse - deploy early so every later step gets traces from day one. See our Langfuse guide.

- Vector DB - Qdrant cluster and a test collection. See our Qdrant guide.

- Embedding service - TEI with a tiny model first, then swap in your real embedder once the pipeline works.

- LLM gateway - LiteLLM with one provider, one virtual key. See our LiteLLM guide.

- Orchestration service - dumbest possible retrieve-generate loop against a 10-doc test corpus.

- Ingestion pipeline - wire up one real source, one-way, watch it flow through.

- Eval harness - deploy Ragas or equivalent, wire up LLM-as-judge, start tracking baselines.

- Harden - NetworkPolicies, PDBs, autoscaling limits, alerts, runbooks, DR drill.

Expect 3-4 weeks with a competent platform team. Double that if you’re new to Kubernetes.

Anti-patterns we see all the time

- RAG logic embedded in the application monolith. Moves too slowly, can’t A/B test retrieval strategies, couples app releases to RAG changes. Move orchestration out.

- One big pod that embeds, retrieves, and generates. Each layer has different resource needs and scaling curves. Separate them.

- Vector DB as the single source of truth for documents. Treat the vector DB as an index, not storage. Keep originals in S3 / SharePoint / Confluence.

- No observability until after the first quality incident. Langfuse is the cheapest component of the stack. Deploy it first.

- Hardcoded provider keys in application code. Centralize via LiteLLM. Rotate without app releases.

- Ignoring reranking. Bi-encoder retrieval + cross-encoder rerank outperforms bi-encoder alone on almost every real corpus. Budget for it.

- Skipping tenant-ID filters “because we only have one tenant today.” Add the field at ingestion from day one; retrofitting it later is painful.
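A cross-encoder rerank slots in between retrieval and prompt assembly. Retrieve a wide candidate set, score every (query, chunk) pair, and keep the best few; the orchestrator above does this with limit=20 and hits[:8]. This sketch assumes a second TEI deployment serving a reranker model at a hypothetical in-cluster URL. It calls TEI's /rerank route, which returns {index, score} pairs. Candidates are hypothetical dicts with a "text" field built from the Qdrant payload. score_fn is injectable so another cross-encoder can be swapped in.

```python
import json
import urllib.request

RERANK_URL = "http://tei-rerank.embeddings.svc.cluster.local:80/rerank"  # hypothetical Service


def tei_rerank_scores(query: str, texts: list[str]) -> list[float]:
    """Score each text against the query with a TEI reranker; returns scores in input order."""
    req = urllib.request.Request(
        RERANK_URL,
        data=json.dumps({"query": query, "texts": texts}).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )
    with urllib.request.urlopen(req, timeout=10) as resp:
        ranked = json.load(resp)  # list of {"index": i, "score": s}
    scores = [float("-inf")] * len(texts)
    for item in ranked:
        scores[item["index"]] = item["score"]
    return scores


def rerank(query: str, candidates: list[dict], top_n: int = 8,
           score_fn=tei_rerank_scores) -> list[dict]:
    """Reorder bi-encoder candidates by cross-encoder score and keep the best top_n."""
    if not candidates:
        return []
    scores = score_fn(query, [c["text"] for c in candidates])
    order = sorted(range(len(candidates)), key=lambda i: scores[i], reverse=True)
    return [candidates[i] for i in order[:top_n]]
```

The rerank step only reorders what retrieval returned, so the tenant_id filter still has to be applied at query time. Reranking cannot recover isolation that retrieval skipped.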

Related reading

Component deep dives referenced above:

- Deploy Langfuse on Kubernetes: Production Self-Hosted Guide

- Deploy Qdrant on Kubernetes: Production HA Guide

- Deploy LiteLLM Proxy on Kubernetes: Enterprise LLM Gateway

Coming up in this series:

- Deploy Dify on Kubernetes: self-hosted AI application platform

- vLLM vs TGI vs Triton: self-hosted LLM serving benchmark

- Ragas + LangWatch on Kubernetes: continuous RAG evaluation

Getting help

NomadX deploys this reference architecture for enterprise AI teams across the GCC - including regulated industries (fintech, government, healthcare) that need the full sovereignty blueprint. Typical engagement: 4-8 weeks from kickoff to production RAG serving real users. AI/ML Infrastructure on Kubernetes is the starting point.

Frequently Asked Questions

What does a production RAG stack on Kubernetes actually include?

A production-grade RAG system has six layers: (1) an ingestion pipeline that chunks and normalizes source documents, (2) an embedding service that converts chunks to vectors - typically a self-hosted text-embeddings-inference or OpenAI/Azure endpoint, (3) a vector database like Qdrant or Milvus for retrieval, (4) an LLM gateway like LiteLLM for provider routing and policy, (5) an orchestration layer (LangGraph, LlamaIndex, or custom service) that runs the retrieve-rerank-generate flow, and (6) an observability layer like Langfuse for traces, evals, and cost attribution. Missing any one of these creates a blind spot that usually shows up in production as a latency, cost, or quality incident.

Do I need Kubernetes for RAG, or can I just use managed services?

Managed services (Azure AI Foundry, AWS Bedrock Knowledge Bases, Vertex AI RAG Engine) are reasonable for proofs of concept and mid-market workloads without data-residency constraints. Kubernetes becomes the right answer when you need: (a) data sovereignty (GCC, regulated EU), (b) hybrid on-prem plus cloud topology, (c) custom retrieval logic the managed services don't expose, (d) cost control at volumes above ~100M tokens per month where managed markups exceed self-hosting costs, or (e) deep integration with existing Kubernetes-based platform infrastructure. Many of our clients start on managed and migrate to Kubernetes as the program matures.

How do I size a RAG cluster on Kubernetes?

Size each layer independently, because they have different scaling dimensions. Vector DB scales on collection size and query RPS, embeddings on documents-per-second during ingest and queries-per-second at serving, LLM generation on tokens-per-second and concurrency, and the orchestration layer on request count. A starting footprint that handles ~50 RAG QPS with 10M vectors is roughly: 3-peer Qdrant cluster (3 × r6i.xlarge), 2-replica TEI on g5.xlarge, LiteLLM proxy 3 × 1 vCPU, Langfuse small tier, and a 3-replica orchestration service. Budget 25,000-40,000 AED per month on EKS me-central-1 before LLM tokens.

Where should the orchestration logic actually run?

Put retrieve-rerank-generate orchestration in its own deployment - don't put it in your application layer and don't put it in the LLM gateway. The orchestration service is stateless, scales on request rate, and owns the RAG-specific logic: query rewriting, retrieval, reranking, prompt assembly, and response post-processing. Running it as a dedicated service means your application code stays thin and replaceable, and you can A/B test retrieval strategies without shipping application changes.

How do I handle RAG quality evaluation in production?

Continuous evaluation runs as an async Kubernetes CronJob or a KEDA-scaled worker against a labelled evaluation set. Langfuse and Ragas are the two common evaluation stacks; we usually deploy both - Ragas for offline metrics (faithfulness, answer relevance, context precision) and Langfuse for online human or LLM-judge evals on a sample of production traces. Budget 2-5% of LLM token spend for evaluation. Ship the results to Grafana so the quality dashboard is next to the latency and cost dashboards.

Can the full RAG stack stay in-region for GCC data sovereignty?

Yes, if you pick the right providers at each layer. Vector DB and orchestration run in your own cluster. Embeddings can be Azure OpenAI text-embedding-3 in UAE North or self-hosted TEI on in-region GPU nodes. Generation uses Azure OpenAI UAE North or Bedrock Middle East (Bahrain) as primary, with optional OpenAI-US fallback explicitly disabled for regulated workloads. Langfuse is always self-hosted in the same region. This is the stack we deploy for NESA and CBUAE-regulated clients.

Get Started for Free

We would be happy to speak with you and arrange a free consultation with our Kubernetes Expert in Dubai, UAE. 30-minute call, actionable results in days.
